How to Use the Google Search Console as a Beginner

Updated April 18, 2023

Is Your Website Highly Optimized to Welcome Visitors with Open Arms? The Google Search Console is free for businesses and has a wealth of tools that help decipher site ranking updates.

Most SEO and SEM professionals have a whole pack of tools they rely on to complete search engine optimization tasks. As the scope of work deepens and some tools increase in cost, SEOs are turning to Google Webmaster Tools (now the Search Console) more often.

Table of Contents

- How to Use the Google Search Console as a Beginner

- What is the Google Search Console?

- Google Performance Tab

- Shopping Tab Listings Report

- Google Search Console Video Index Report

- GSC Updates “Submit To Index” To “Request Indexing”

- Search Console Property Sets

- The Search Console HTTPS Report

- Google Search Console’s Top SEO Tools

- Merge your Search Console with AdWords, Analytics, and Looker Studio

- Search Console Voice Search Report: Future Features

- Google’s Beginner Search Console Instructions

In 2015, “Google Webmaster Tools” was renamed the “Search Console.” At the time, its URL remained the same. Google continues to release many changes to the Search Console, which make it a more valuable source for search marketing optimization than ever before. These changes range from cross-domain analytics to new enhancements. Every B2C and B2B business needs to prioritize its marketing strategies accordingly.

First, here are a few common questions answered.

What is the Google Search Console?

Every online business can sign up to use the Google Search Console site optimization tools. It is a free service that Google provides to help search professionals monitor, maintain, and troubleshoot a site’s presence in Google Search results. We find its core function is to confirm that Google can find and crawl your site. If you fix indexing problems and request re-indexing of new or updated content, Google can recrawl and index your pages faster.

Google has invested heavily in adding new features and reports in recent years and months. Search Console Insights is now in beta for some sites to test before it fully rolls out.

How does the Google Search Console support SEO?

Your Google Search Console (previously called Google Webmaster Tools) is a vital source to help you know how Googlebot sees your site. It makes it easy to find and fix technical errors and then resubmit those pages to be recrawled. It offers ways to test and submit sitemaps, see backlinks, and know where you stand with rich featured snippets in Google SERPs.

There are very compelling reasons why webmasters should use the Google Search Console to build visibility as Google Search incorporates more machine learning. By syncing many Google platforms, it lets digital marketers plan with a higher degree of confidence.

All of the tips we will cover here assume that your site, or a version of it, is already verified. There are many great tutorials on how to accomplish that; now that you are ready to dive into its many useful tools, I will cover my favorites.

Does Google Search Console show organic or paid traffic?

The Google Search Console shows organic data for all data sets except impressions, which include data from Google Ads. The Search Console data covers much more reporting for organic search results, including Search Appearance, Total Clicks, Total Impressions, Average CTR, and Average Position. These can also be filtered by device, date, query, page, country, rich result, and Web Light result.

Additionally, new Google Search Console reports added in 2020 and 2021 give site owners and search marketers additional data insights.

Does Google provide domain-wide data?

Yes. Domain-wide data was announced on February 27, 2019. Google recommends that businesses verify all versions of their website for a better overall view, including the HTTP, HTTPS, www, and non-www site versions. This improves on the many separate listings we previously needed to understand the full picture of how Google assesses a domain as a whole. Now webmasters can quickly verify and view the data from Google Search for a whole domain.

For many who used the GSC at all, the Content Keywords report was typically one of the first reports pulled. There are now other ways to gather the same information, so it is no great loss that Google has dropped the Content Keywords feature from Google Search Console.

Why is my data missing in Google Search Console?

If you have only just set up your site in the Google Search Console, give it time. The most common reason why all or some reports are missing from the new Search Console is that Google simply hasn’t migrated them yet. Also, sometimes it stalls for a week or so, or has a bug. We can take a look at it for you.

Many Minneapolis SEO experts, SEM consultants, and agencies take the time to understand and follow Google’s SEO Guidelines. It is important to know whom you can trust before enlisting their search engine optimization services. Google even warns you, “Make sure to research the potential advantages as well as the damage that an irresponsible SEO can do to your site”.

Someone who understands the many in-depth ways to use the GSC reports is key to solving technical eCommerce SEO issues.

Top SEO Tasks Accomplished Via the Google Search Console

All search marketing experts have to learn to live with and respect what Google wants in order to deliver results to our clients. By striving to meet exactly what Google wants, you can expect Google to eventually reward your efforts with more visibility in Google SERPs.

We cannot expect free organic traffic from Google unless we adhere to the technology giant’s Search Quality Rater Guidelines. It is best to honor where that free web traffic comes from and align your existing content and web pages with those guidelines. In return, your site will gain more credence for being trustworthy, credible, and authoritative.

What are the top SEO Reports in the Google Search Console?

The Search Console reports have become more valuable to digital marketers, since they let you (partial list):

- Discover opportunities to improve the performance or ranking of a particular page

- Determine which keywords and keyword phrases drive web traffic

- Audit and fix schema markup

- Illustrate how a hired SEO consultant’s efforts have improved rankings

- Better comprehend how searchers recognize a brand

- See how web content is matched to search queries

- Unearth additional favorable keyword opportunities

- Locate and fix broken links

- Learn about technical SEO status updates

- Track how Google views your event markup

Google has ramped up how webmasters can use the Search Console for SEO tasks with several new features and the promise of more to come. Two of the latest features from Google will help you stay better apprised of the latest updates in search. Let’s jump straight in and see “What’s New in Google Search Tools” for May 2016. Google also announced that by using property sets in Google Webmaster Tools, it is easy to tie tracking for your mobile app, mobile site, and desktop site together.

During a recent Google Webmasters hangout on the topic of the Search Console and mobile-first search indexing, John Mueller, Google Webmaster Trends Analyst, revealed an update to the amount of search data that Google plans to make available to marketers in the Search Console. If we do gain a full year’s worth of data, it will benefit SEOs immensely.

“If you have a year’s worth of data, then the UI needs to probably be slightly different than what we have now,” Mueller stated. This is useful for letting you know how your site’s data lines up.

“It’s important to note that the information you include (or don’t include) in your metadata can encourage or discourage people from clicking through to your website from the SERPs. Optimized metadata is when the meta title, meta description, and schema are all technically accurate for SEO purposes.” – Linkio’s Complete Introduction to SEO Optimization Guide

Google Performance Tab

Google Discover and Google News reports

The Performance tab shows which pages and keywords your website ranks for in Google Search. Additional reports reveal your content’s performance in Google Discover and on Google News; however, not all sites are eligible for those reports. Formerly, we only had data going back 90 days, but now you can research your data for up to 16 months.

By checking the performance tab weekly, you learn what keywords or pages need fixing or better optimization.

Web Light Results & Event listing tracking

Business owners now benefit from a new Google Search Console report: digital marketing managers can track the performance of their event listings in Google Search.

This underscores how much search engines currently rely on schema.org structured data to recognize events and other rich card formats. You can find the report using the new filters under ‘Search appearance.’

In order for Search Console to track this data, SEOs need to add and maintain current event structured data markup on pages that include event details. Google posted a document detailing the difference between ‘event listings’ and ‘event details’.

Filter your data by selecting event listings and event details.
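For readers who want to see what event markup can look like, here is a minimal Python sketch that prints a schema.org Event block in JSON-LD, one of the formats Google reads for event rich results. The `event_jsonld` helper, the event name, date, venue, and address are hypothetical placeholders, and the fields shown are only a small subset; check Google’s event structured data documentation for the properties it currently requires.

```python
import json


def event_jsonld(name: str, start_date: str, venue: str, address: str) -> str:
    """Return a <script> tag containing schema.org Event markup as JSON-LD.

    Hypothetical helper for illustration; it is not a Google or schema.org API.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Event",
        "name": name,
        "startDate": start_date,  # ISO 8601 date or date-time
        "location": {
            "@type": "Place",
            "name": venue,
            "address": address,
        },
    }
    return (
        '<script type="application/ld+json">\n'
        + json.dumps(data, indent=2)
        + "\n</script>"
    )


if __name__ == "__main__":
    print(event_jsonld(
        name="Spring SEO Workshop",              # hypothetical event
        start_date="2023-05-18T09:00-05:00",
        venue="Example Conference Center",       # hypothetical venue
        address="123 Example Ave, Minneapolis, MN",
    ))
```

The generated script tag goes in the page that carries the event details; Search Console can only report on markup it actually finds when crawling.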

Google states: “...you can easily check any page on your website and see how Googlebot fetches it immediately, Search Analytics shows you which keywords we’ve shown your site in search for, and Google informs you of many kinds of hacks automatically. Additionally, users were often confused about the keywords listed in content keywords. And so, the time has come to retire the Content Keywords feature in Search Console.” Before it was retired, we used the feature to identify possible issues to resolve with product schema markup.

Shopping Tab Listings Report

This newer report is in the Shopping section. You may see “Your Shopping tab listings are managed in Merchant Center,” or the message “This property doesn’t have products eligible for the Shopping tab.” The latter means you are missing out on key eCommerce data insights.

Consistently check how Google sees your products and if they get proper rich results. This eCommerce Schema Markup Guide will show you how to see if they are valid or if missing fields should be filled in to make your product snippets more prominent.

Click on a product to see which fields are missing for particular products and whether they are essential or nice-to-haves. Once you’ve added them to your products’ structured data, you can validate the fix in Search Console.

The Shopping section also houses your Google Merchant listings. You can enable your Shopping tab listings to display your products on the Shopping tab in Google Search. Google provides eCommerce site owners with a lot of reports to help them sell their items faster.

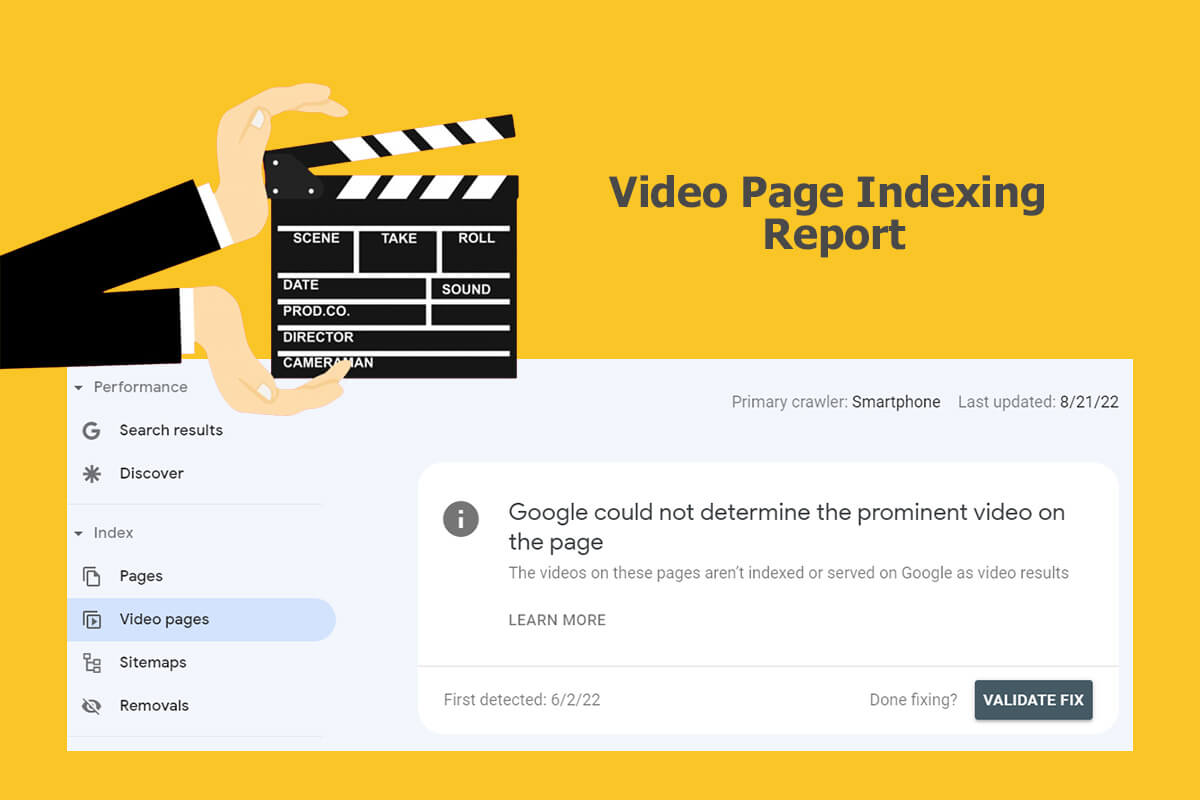

Google Search Console Video Index Report

Google originally announced this video indexing report on July 11, 2022.

Video creation and consumption on the web continue to be favored by users. In line with that, the Google Search Console has added a report to help site owners understand the performance of their videos on Google. It is useful for identifying video content that can benefit from improvement. Google tells us this new report “shows the status of video indexing on your site”.

As of April 2023, the video indexing report flags inline data URLs that are used as video URLs as “Invalid video URL.”

The Google Search Console Video Page Indexing Report answers the following questions:

- On how many pages has Google identified a video?

- Which videos were indexed successfully?

- What are the issues preventing videos from being indexed?

Give the Search Console time to update and remove errors.

If you are using the WooCommerce plugin, you’ll need to test code. We have used this approach on multiple sites with repeated success. Currently, it is necessary to remove the markup that WooCommerce places on category pages, either by hand or in the functions.php file. Experience tells us that eCommerce sites and functions can be very finicky, so it is best to have someone who really understands code make changes to the functions.

Blend your Search Console data into Google Looker Studio to discover new ways to improve your question-and-answer content. It also lets you review your FAQ schema markup.

By comparing similar date ranges and using filters, you can research organic traffic drops using your Search Console custom reports.
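To make that date-range comparison concrete, here is a minimal Python sketch. It assumes you have exported query-level clicks and impressions for two comparable periods from the Performance report and loaded them into dictionaries; the dictionary layout, the `biggest_drops` helper, and the sample numbers are all hypothetical. CTR is computed as clicks divided by impressions.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (clicks / impressions)."""
    return clicks / impressions if impressions else 0.0


def biggest_drops(before: dict, after: dict, top_n: int = 5) -> list:
    """Compare two periods of {query: (clicks, impressions)} data.

    Returns (query, click_change, ctr_before, ctr_after) tuples,
    with the largest click loss first.
    """
    rows = []
    for query in set(before) | set(after):
        clicks_b, impr_b = before.get(query, (0, 0))
        clicks_a, impr_a = after.get(query, (0, 0))
        rows.append((query, clicks_a - clicks_b,
                     ctr(clicks_b, impr_b), ctr(clicks_a, impr_a)))
    # Most negative click change (largest loss) sorts first.
    rows.sort(key=lambda row: row[1])
    return rows[:top_n]


if __name__ == "__main__":
    last_month = {"seo audit": (120, 3000), "schema markup": (80, 1600)}
    this_month = {"seo audit": (60, 2900), "schema markup": (85, 1700)}
    for query, change, ctr_b, ctr_a in biggest_drops(last_month, this_month):
        print(f"{query}: {change:+d} clicks, CTR {ctr_b:.1%} -> {ctr_a:.1%}")
```

A query whose impressions hold steady while CTR falls often points to a snippet or SERP-feature change rather than a ranking loss, which is worth checking before rewriting content.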

GSC Updates “Submit To Index” To “Request Indexing”

The wording is now “Request indexing,” which changes to “Indexing requested” once clicked and after you confirm you are not a robot. Formerly it was “Submit to index.” This may reflect Google’s move to a stricter posture about a site’s overall health status. In practice, using the feature does not guarantee that Google will index or rank the page immediately, or at all. You need to have your site technically configured correctly and adhere to Google’s Guidelines.

Google states, “Note: The page will be considered for indexing only if it meets our quality guidelines and avoids the use of noindex directives.”

1. Choose either Fetch or Fetch and Render:

• Fetch: Fetches the specified URL on your site and displays the HTTP response. It does not request or run any associated resources on the page. It runs quickly, which makes it useful when you need to debug suspected network connectivity or onsite security problems, or simply learn whether Google can fetch the page.

• Fetch and render: Fetches the specified URL from your site and displays the HTTP response, while also rendering the page for a selected platform (desktop or smartphone). This operation requests and runs all resources on the page (such as images and scripts). It is ideal for spotting visual differences between how Googlebot views the page and how a site visitor sees it.

2. The request will be added to the fetch history table, with a “pending” status.

When the request is complete, the row shows whether it succeeded or failed, along with basic information. Click any non-failed fetch row in the table for further details about the request, including raw HTTP response headers and data, and (for Fetch and Render) a list of blocked resources and a view of the rendered page.

3. Optionally, ask Google to recrawl the page.

If the fetch succeeded and is less than four hours old, you can request that Google crawl and possibly re-index the fetched page, and optionally any pages that the fetched page links to.

Search Console Property Sets

How to Set Up and Use Google Search Console Property Sets:

1. Establish a property set

2. Click to select the properties you want to add

3. Give it a few days for the data to be collected and populate

4. Benefit from the new insights in your Google Search Analytics!

Property sets treat all the URIs from the included properties as a single entity in your Search Analytics features. “This means that Search Analytics metrics aggregated by the host will be aggregated across all properties included in the set”, states Google. This feature accommodates all property types. Businesses can now get a high-level snapshot of their international sites, combinations of HTTP and HTTPS websites, or different segments or brands that host individual websites, or monitor the Search Analytics of all their apps under one umbrella, all with this one new feature.

As marketing grows more agile, the need for visibility in a changing search landscape grows more intense. New console reports make managing tasks easier, and this new feature saves time and improves efficiency. The reality is that we all face time pressures when handling mobile marketing on top of our more traditional channels. Previously, these reports and statistics had to be accessed separately; now marketers have fewer places to log into to get this work done. The Search Console introduced “property sets” as a handy way to integrate multiple properties (for both mobile apps and websites) into one place. The SEO tasks of tracking overall clicks and SERP impressions are simplified with access to a single report.
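Conceptually, a property set adds up each member property’s metrics into one total. The Python sketch below only illustrates that idea: the `aggregate_clicks` helper, the property names, and the click counts are hypothetical, and this is not how Google computes the report internally, just a way to picture metrics “aggregated across all properties included in the set.”

```python
from collections import Counter


def aggregate_clicks(properties: dict) -> Counter:
    """Sum clicks per query across every property in a (hypothetical) set.

    `properties` maps a property name to a {query: clicks} dict.
    """
    totals = Counter()
    for clicks_by_query in properties.values():
        for query, clicks in clicks_by_query.items():
            totals[query] += clicks
    return totals


if __name__ == "__main__":
    property_set = {
        "http://www.example.com/": {"running shoes": 40, "trail shoes": 5},
        "https://www.example.com/": {"running shoes": 310, "trail shoes": 90},
        "android-app://com.example.app/": {"running shoes": 25},
    }
    for query, clicks in aggregate_clicks(property_set).most_common():
        print(query, clicks)
```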

The Search Console HTTPS Report

John Mueller, Google Webmaster Trends Analyst, keeps us updated on Search Console report updates and ways to calculate site clicks and glean value in Search Insights.

On September 14, 2022, a report on the HTTPS status of sites was added to follow the Google Page Experience report added in 2021. Its purpose is to help site owners understand and solve issues affecting how Google assesses their site. It helps identify which pages are not served over HTTPS and how to improve them.

We quickly adopted this HTTPS report. Its list of sample URLs makes it easier to understand how a client’s HTTPS pages are served on Google Search.
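Alongside the HTTPS report, you can run a quick sanity check on your own list of URLs, for example from a sitemap or crawl export. This minimal Python sketch simply flags URLs whose scheme is not https; the `non_https_urls` helper and sample URLs are hypothetical, and because it only looks at the URL text, it cannot tell you how Google actually serves each page.

```python
from urllib.parse import urlsplit


def non_https_urls(urls: list) -> list:
    """Return the URLs in `urls` whose scheme is not https."""
    # urlsplit normalizes the scheme to lowercase.
    return [url for url in urls if urlsplit(url).scheme != "https"]


if __name__ == "__main__":
    sample = [
        "https://www.example.com/",
        "http://www.example.com/old-landing-page",
        "https://www.example.com/products/",
    ]
    for url in non_https_urls(sample):
        print("Not HTTPS:", url)
```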

John Mueller also states, “you may see a change in the click, impression, and CTR values shown there. For most sites, this change will be minimal. A significant part of this change will affect website properties with associated mobile app properties. Specifically, it involves accounting for clicks and impressions only to the associated application property rather than to the website. Other changes include how we count links shown in the Knowledge Panel, in various Rich Snippets, and in the local results in Search (which are now all counted as URL impressions).”

Google Search Console’s Top SEO Tools

1. Google Structured Data Markup Helper

2. Google Data Highlighter

3. List of Google Site Links

4. Number of Pages Indexed

5. Mobile-Friendly Test Tool

6. Search Terms Report

7. Google Main Message Center

8. Fetch as Google Tool

9. Disavow Tool

10. Test and Submit a Sitemap

11. Search Analytics Tool

12. Crawl Errors Tool

Many of the above improve your chances of influencing how your Google Knowledge Graph entry populates. Google’s RankBrain artificial intelligence powers its Knowledge Graph search results, which add semantics and context to enrich keyword-based search.

Additionally, the Knowledge Graph Search API helps webmasters and SEM experts find entities in the Google Knowledge Graph. The API uses standard schema.org types and is compliant with the JSON-LD specification, a format Google often prefers. Call and request additional information if needed: 651-206-2410.

Now for a more in-depth look at each of the 12 Tools that help optimize a website.

1. Google Structured Data Markup Helper

The more information you give Google through structured data markup to understand your site’s content, the more precisely it can match your content to search queries. To help businesses accomplish this task, Google created a tool that makes it simpler to embed structured data markup directly into your web pages. Why take the time to do this? Correctly embedded structured data markup helps all search engines understand your evergreen content, not just Google.

Google’s Structured Data Markup Helper lets you tag the key properties of the relevant data type with your mouse. Select your product brand, color, size, or model number, and the tool generates example HTML code with microdata markup included. Download it and use it as your guide when implementing structured data on your website.

When facing time constraints, this tool is much faster than checking each page individually for schema markup! Webmasters can start out with a high-level summary of how Google sees your site’s structured data and drill down for more information on a page level.

2. Google Data Highlighter

The Structured Data Markup Helper offers more than Google’s Data Highlighter, released in December 2012. The easy-to-use Data Highlighter lets webmasters show Google the pattern of structured data on their site without having to edit their HTML. Be aware that this method of markup is useful only to Google.

Examples of using the Data Highlighter:

• Tag data about a movie: include its title, the name of the director, the number of reviews, and the quality of viewer ratings.

• Tag data for multiple events on your calendar: include the date, name of the event, and the location it is held at.

The Data Highlighter makes it easy to mark up third-party general resource materials that support the context of your page’s topic. For SEM experts seeking a more comprehensive implementation of structured data, Schema.org offers code examples for additional fields and options.

3. List of Google Site Links

Google lists the number of backlinks it recognizes that point to your website. While Google punishes link schemes and low-quality links, the Search Console isn’t the easiest tool for SEO clean-up work on your site’s linking structure. Be sure to stay current on what constitutes a violation by reading Google’s Webmaster Guidelines.

Take the list of backlinks provided in your Search Console and use other tools to decipher if this hurts or helps your site. If your site has been penalized due to a link scheme, it may be hurting your digital rankings. By cleaning up your backlink profile, you can increase your domain authority and improve your chances of showing up in SERPs.

Other tools, like Link Detox and Ahrefs, can support the process; both do a good job of finding risky links. Link Detox also creates a disavow file automatically, which can begin the restorative process of earning back your rankings.

4. Number of Pages Indexed

Every business needs a healthy amount of search engine-accessible text on their site to be considered of value to visitors. Use the Google Search Console to follow the number of pages that it says it indexes. Note the pages that it misses, determine a better way to optimize, and make improvements to increase your visibility in search results.

Too few pages indexed could be due to some of them being mistakenly blocked by your robots.txt file, or they could be temporarily unavailable to Googlebot. Once your site is crawled, the Google Search Console will show you the pages that are indexed; it takes some further work to find which ones are not. NOTE: The console also offers a robots.txt testing tool.
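To double-check whether a robots.txt rule explains missing pages, you can test URLs against the file yourself. The sketch below uses Python’s standard `urllib.robotparser`; the robots.txt rules, the `blocked_urls` helper, and the URLs are hypothetical. This parser follows the original robots.txt conventions and does not match Googlebot’s handling of every rule (for example, `*` wildcards inside paths), so treat Search Console as the final word.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules for an online store.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /checkout/
"""


def blocked_urls(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    pages = [
        "https://www.example.com/products/trail-shoe",
        "https://www.example.com/cart/item-42",
    ]
    print(blocked_urls(ROBOTS_TXT, pages))
```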

5. Mobile-Friendly Test Tool

Google states: “Websites with mobile usability issues will be demoted in mobile search results.”

The Google Search Console offers essential tools to assess your site’s mobile-friendliness for standard web pages. Since many buyers rely on mobile devices, this helps improve your business’s Google Shopping results on mobile. Additionally, check your site’s mobile-friendliness by using BrowserStack to see how it displays on multiple browsers. Google’s new algorithm could drastically affect your website’s traffic levels if it’s not mobile-friendly yet.

Common mobile usability error messages:

• Touch elements are too close together: On smaller screens, touch elements such as menus, call-to-action buttons, and navigational links that sit close to each other can challenge a mobile user. Think of a construction worker with thick, muscular fingers who cannot tap a desired element without also bumping a neighboring one.

• Unreadable font size: This identifies web pages whose text is too small to be legible for most mobile visitors without resorting to “pinch to zoom.”

• Interstitial usage: Some companies advertise their mobile apps by opening a page before or after the expected content page when users browse from a mobile device. Google considers this a poor user experience because screen space is at such a premium. An interstitial typically obscures the page text and content, and it is especially annoying when it is also difficult to dismiss.

• A mobile viewport is not configured: The number of devices visitors use to reach your site is only increasing, so you must prepare your website to display correctly on varying screen sizes. All web pages should specify a viewport by using the meta viewport tag, which instructs browsers how to adjust the page’s dimensions and scale to fit the device.

• Fixed-width viewport: This flags pages whose viewport is set to a fixed width. Some web designers set the viewport to a specific pixel size to adapt a non-responsive page to typical mobile screen sizes.

• Web content isn’t sized to viewport: Some pages may necessitate horizontal scrolling in order to see the content. This occurs if pages have absolute values declared in CSS files, or use images intended for optimal viewing at a specific browser width (such as 1020px).

• Outdated use of Flash: It started when the iPhone refused it; today almost all mobile browsers refuse to render Flash-based content. Therefore, someone searching from a mobile device will not be able to use a page that relies on Flash for managing its content, animations, or navigation.
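Two of the errors above, a missing viewport and a fixed-width viewport, come down to the meta viewport tag, whose usual responsive form is `<meta name="viewport" content="width=device-width, initial-scale=1">`. The minimal Python sketch below parses a page’s HTML with the standard library and reports which of those two situations applies. The `ViewportFinder` class, `viewport_issue` helper, and returned messages are our own hypothetical wording, only loosely mirroring the report’s labels, and the check is far simpler than Google’s mobile usability testing.

```python
from html.parser import HTMLParser
from typing import Optional


class ViewportFinder(HTMLParser):
    """Records the content of the first <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.viewport = None

    def handle_starttag(self, tag, attrs):
        # Tag and attribute names arrive lowercased from HTMLParser.
        if tag == "meta" and self.viewport is None:
            attributes = dict(attrs)
            if (attributes.get("name") or "").lower() == "viewport":
                self.viewport = attributes.get("content") or ""


def viewport_issue(html: str) -> Optional[str]:
    """Describe a viewport problem, or return None if it looks responsive."""
    finder = ViewportFinder()
    finder.feed(html)
    finder.close()
    if finder.viewport is None:
        return "Viewport not configured"
    # Parse "key=value, key=value" pairs from the content attribute.
    settings = {}
    for part in finder.viewport.split(","):
        key, _, value = part.partition("=")
        settings[key.strip().lower()] = value.strip().lower()
    if settings.get("width") != "device-width":
        return "Viewport width is not device-width"
    return None


if __name__ == "__main__":
    page = '<html><head><meta name="viewport" content="width=980"></head></html>'
    print(viewport_issue(page))
```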

6. Search Terms Report

You can learn what Google considers your important content, which it uses to match search queries to your site.

Google took an action that hundreds of leading SEOs and digital marketers dreaded: the encryption of all earned search traffic. The percentage of “(not provided)” data in Google Analytics had already been steadily increasing, but now its levels make it nearly impossible to see, in the original SEO reporting in Analytics, which Google keywords should be credited with site visits and conversions.

The official reason we no longer get the Google Analytics keyword reports that many relied on is the encryption of all organic keyword-level data due to privacy concerns. Some speculate this change was triggered by the NSA furor that took place at the time. This caused an increase in “(not provided)” keyword data in Google Analytics.

Many want to learn whether Google Analytics Premium will start to provide keyword-level data as the 360 version rolls out. Our premise is that Google is encouraging businesses to use Google AdWords more; AdWords offers richer reports, in Analytics and within AdWords itself, that are helpful for testing keywords and monitoring data trends. Although organic keyword data is encrypted, Google is more generous with paid keyword data. It makes sense that those who pay for services gain additional benefits over businesses that only use the free tools.

7. Google Main Message Center

This is a key area to watch for critical messages, like a possible Google penalty.

My understanding is that a Google penalty shouldn’t last forever. Once the work is done to correct whatever led to the penalty, it is cleared, and you have reason to believe that your site will recover over time. However, lifting the penalty requires site changes, and a side effect of those necessary changes is that you may no longer benefit from the ranking successes you had before the penalty occurred. Be prepared to remove blog comment spam, paid-for backlinks, inclusions in useless article directories, negative SEO backlinks from competitors, inbound links from low-quality and unrelated websites, overdone interlinking between owned sites, and more.

Common message types found in the Google Search Console

1. Increase in 404 errors

2. New site owner

3. New preferred domain change

4. New geotarget

5. Site infected with Malware

6. Problems with crawling your site

7. How to monitor search traffic

8. How to improve search engine optimization

8. Fetch as Google Tool

This tool works as an immediate test to determine if Google can crawl a specific web page.

The Fetch as Google tool enables you to test how Google crawls or renders a URL on your site. You can use Fetch as Google to see whether Googlebot can access a page on your site, how it renders the page, and whether any page resources (such as images or scripts) are blocked to Googlebot. It will also show you how Google sees your schema markup, for example whether your Fact Check schema, or any other schema type, is correct. This tool simulates a crawl and render as performed in Google’s normal crawling and rendering process, and is useful for debugging crawl issues on your site.

As the BERT algorithm improves, correctness matters more for establishing domain authority.

Here are 2 options when running a fetch:

1. Fetch: Fetches a single URL on your site and displays the HTTP response. Associated resources, such as images or scripts, are not requested. This shortens the runtime when debugging potential network connectivity or security problems; you simply get a success or failure response to the request.

2. Fetch and render: Fetches a specified URL on your site, displays the HTTP response, and also renders the page for a specified platform (desktop or smartphone). This operation requests and runs all resources on the page (including images, PDFs, other support files, and scripts). It is useful for comparing how Googlebot sees your page, how you expected it to look, and how a user sees it.

How do I test a schema using the Google Search Console?

The Fetch and Render tool is now the best way to get new content indexed and test live results. It lists errors found in FAQ, HowTo, Product, and Review markup, and more. For example, it will show you if you are missing a required element of aggregated review schema. If you’ve been frustrated in the past by errors and warnings that are hard to understand, new updates to the console include explanations from Google. Each error and warning has a Learn More button that clicks through to the exact same page. Submit requests on the Google Search forum if you have ideas to improve its user-friendliness.

Search Console product schema errors often involve “offers”, “review”, or “aggregateRating”. We’ve spent a lot of time resolving these issues and are glad to help if you find this taxing. As more structured data fields become required, expect to see a new range of warnings, including Missing field “brand”, Missing field “SKU”, Missing field “offers”, and more are sure to come. Product schema helps power the visually rich mobile product carousels.

Click any non-failed fetch row in the table to get additional details, including raw HTTP response headers and data, and (for Fetch and Render) a list of blocked resources and a view of the rendered page.
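If you want to catch product schema gaps before Search Console reports them, a simple check helps. This Python sketch parses a Product JSON-LD object and lists which of the fields named in the warnings above (“brand”, “SKU”, “offers”, “review”, “aggregateRating”) are absent. The field list is drawn from this section, not from Google’s official required/recommended lists, and the `missing_product_fields` helper and sample product are hypothetical.

```python
import json

# Fields taken from the Search Console warnings discussed above. This is an
# illustrative checklist, not Google's official required/recommended list.
CHECKED_FIELDS = ("brand", "sku", "offers", "review", "aggregateRating")

# Hypothetical product used for the demo below.
SAMPLE_PRODUCT = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Trail Running Shoe",
    "brand": {"@type": "Brand", "name": "ExampleBrand"},
    "sku": "TRS-001",
    "offers": {
        "@type": "Offer",
        "price": "89.99",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
}


def missing_product_fields(jsonld_text: str) -> list:
    """Return the CHECKED_FIELDS absent from a Product JSON-LD object."""
    data = json.loads(jsonld_text)
    if data.get("@type") != "Product":
        raise ValueError("expected a schema.org Product object")
    return [field for field in CHECKED_FIELDS if field not in data]


if __name__ == "__main__":
    # prints ['review', 'aggregateRating']
    print(missing_product_fields(json.dumps(SAMPLE_PRODUCT)))
```

A missing “review” or “aggregateRating” is only worth fixing with genuine review data; adding placeholder ratings to silence a warning can violate Google’s structured data guidelines.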

9. Disavow Tool

Regrettably, hackers exist and are very active. A hacker can generate dozens of extraneous pages on a site and then pound them with spammy backlinks from PBNs or other places. These pages may even be auto-generated daily on many sites and cause a real headache. If you suspect this is the case, you will need to conduct a full backlink audit to label each backlink (good, fair, or damaging), send “please remove” requests, document your work, and then, if needed, use the Google Disavow Tool.

It is easy to overdo it with the Google Disavow Tool. Glenn Gabe compared it to a “machete turning into a guillotine when disavowing links”; it is important to know the impact of each individual inbound link before taking dramatic action. Added to that, we now hear from John Mueller that SEOs have “No Need to Disavow Links That Are No Longer Relevant to Your Site”. It is best to learn how to recognize which inbound links are truly problematic before taking the route of disavowing. Over time, these incoming links may have lost relevancy, which is a good thing.

At this time, if you do use the Disavow Tool, don’t expect Google to follow up with any acknowledgment that it has received your file or acted on it. If you are uploading a list of links to disavow, take the time to do every step correctly, and stay alert to possible changes. If you later need to add to the file you submitted, you can download it, append more lines, and upload it again; the new upload overwrites the original file. If your team lacks someone experienced with this Search Console tool, let us know and we will be glad to assist.
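Google’s disavow file is plain text with one full URL or one “domain:” rule per line, and lines beginning with “#” are treated as comments. Because a new upload replaces the old file, one safe way to add links is to merge them into the downloaded copy first. The helper below is a hypothetical sketch of that merge, not a Google tool: it skips exact duplicates and leaves existing lines untouched. All domains and URLs in it are made-up placeholders.

```python
def merge_disavow(existing: str, new_entries: list,
                  note: str = "# Added after latest backlink audit") -> str:
    """Append new entries to a disavow file's text, skipping exact duplicates.

    Non-comment lines are full URLs or "domain:" rules; lines starting
    with "#" are comments and are kept unchanged.
    """
    lines = existing.splitlines()
    # Entries already present in the file (comments and blank lines ignored).
    seen = {line.strip() for line in lines
            if line.strip() and not line.strip().startswith("#")}
    added = []
    for entry in new_entries:
        entry = entry.strip()
        if entry and entry not in seen:
            seen.add(entry)
            added.append(entry)
    if added:
        # Hypothetical comment documenting when the new lines were added.
        lines.append(note)
        lines.extend(added)
    return "\n".join(lines) + "\n"


# Hypothetical downloaded file and new audit findings.
existing = (
    "# Spam links found in January audit\n"
    "domain:spammy-pbn.example\n"
    "https://bad.example/page1.html\n"
)
updated = merge_disavow(existing, ["domain:spammy-pbn.example",
                                   "https://bad.example/page2.html"])
print(updated)
```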

Bruce Clay warns that, “Penguin’s side effect for site owners has been harsh: Links from external sites can and do hurt your site — even if you did nothing to create those links. Too many spammy or unnatural-looking links aimed at your site can torpedo your site in the rankings. In the age of Penguin penalties, SEO-minded webmasters have to be vigilant about their sites’ link profiles.”

On the other hand, if your site’s URLs appear in someone else’s disavow file, that factor alone should not harm your domain, but understanding the data behind it may offer an explanation. If multiple sites list you in their disavow files, pause and examine your website to identify what may have prompted its inclusion.

10. Test and Submit a Sitemap

Most sites have a sitemap file, although one is not mandatory. On a WordPress site, the Yoast SEO plugin manages it easily; otherwise, you can generate it yourself to list the web pages you want to tell Google and other search engines about. The Googlebot web crawler reads it to crawl your site more intelligently. Your sitemap can also provide useful metadata about the pages listed, such as when each page was last updated, how often it changes, and how important it is compared to other URLs on the website.
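If you generate the sitemap yourself rather than through a plugin, the file follows the sitemaps.org protocol: a urlset element containing one url entry per page, each with a required loc and optional lastmod, changefreq, and priority. Below is a minimal sketch using Python’s standard library; the page list is hypothetical. Google’s own sitemap documentation says it ignores changefreq and priority and uses lastmod only when it is consistently accurate, so keep lastmod honest.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list) -> bytes:
    """Build sitemap XML from dicts with a required "loc" and optional metadata."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        # Emit tags in protocol order, skipping any the page doesn't provide.
        for tag in ("loc", "lastmod", "changefreq", "priority"):
            if tag in page:
                ET.SubElement(url, tag).text = str(page[tag])
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages for illustration.
pages = [
    {"loc": "https://www.example.com/", "lastmod": "2023-04-18",
     "changefreq": "weekly", "priority": "1.0"},
    {"loc": "https://www.example.com/services/seo/", "lastmod": "2023-03-02"},
]
print(build_sitemap(pages).decode("utf-8"))
```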

11. Search Analytics Tool

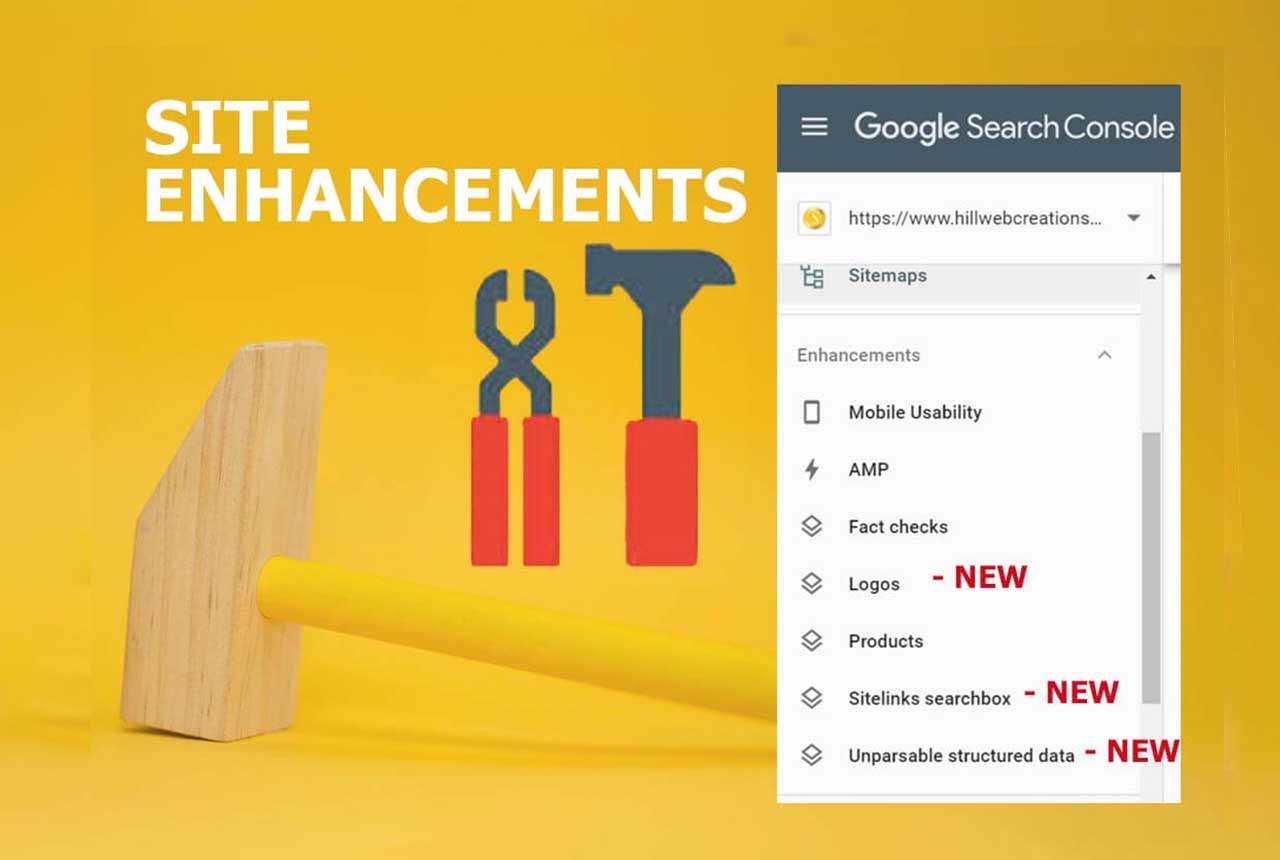

Three excellent new site enhancement reports added:

- Unparsable structured data

- Logo

- Sitelinks Searchbox

These reports are available under the Enhancements section in Search Console; the Logo and Sitelinks Searchbox reports only show up if your site uses the corresponding markup. Google informed webmasters that they can review the trends of errors, warnings and valid items: “To view each status issue separately, click the colored boxes above the bar chart. You can review warnings and errors per page and to see examples of pages which are currently affected by the issues, click a specific row below the bar chart.”

Use them to inform your SEO strategy and improve your content to meet users’ needs. They also help fill gaps left by “(not set)” data in Google Analytics.

Other key site optimization tasks you can improve on:

* Determine your site’s top pages (under impressions)

* Determine your site’s top queries (under impressions)

* Find your site’s click-through rate (under CTR) per query, search type, country, device, and date

* Compare position rankings for similar pages (under position)

* Identify which day of the week typically has higher click activity (under clicks)

* Check how your video and image files are positioned (under Web, then click Compare)

* Landing page analysis: how well your landing pages serve users’ needs is a solid signal for analyzing organic search traffic, since each landing page is generally built around a focus keyword phrase, product, or informational topic. As a result, incoming keyword searches often reflect the page’s main focus. You can see which organic searches on Google lead to which landing pages on your website. All top menu options in Search Analytics offer the “Pages” radio button.
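If you export Performance data and want to slice it outside Search Console, CTR is simply clicks divided by impressions. The sketch below assumes hypothetical rows of (date, query, clicks, impressions); your actual export or API columns may be laid out differently. It totals CTR per query and clicks per weekday, covering two of the checks in the list above.

```python
from collections import defaultdict
from datetime import date

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday")

# Hypothetical rows: (date, query, clicks, impressions).
rows = [
    ("2023-04-10", "seo audit", 12, 300),
    ("2023-04-11", "seo audit", 8, 200),
    ("2023-04-11", "search console help", 5, 50),
    ("2023-04-17", "search console help", 15, 150),
]


def ctr_by_query(rows):
    """CTR per query = total clicks / total impressions for that query."""
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for _, query, c, i in rows:
        clicks[query] += c
        impressions[query] += i
    return {q: clicks[q] / impressions[q] for q in clicks if impressions[q]}


def clicks_by_weekday(rows):
    """Sum clicks for each day of the week (Monday is weekday() == 0)."""
    totals = defaultdict(int)
    for day, _, c, _ in rows:
        totals[WEEKDAYS[date.fromisoformat(day).weekday()]] += c
    return dict(totals)


weekday_totals = clicks_by_weekday(rows)
print(ctr_by_query(rows))
print(max(weekday_totals, key=weekday_totals.get))  # Monday
```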

Google recently updated both tools and merged the best of Search Console and Analytics, helping SEO and SEM experts gauge overall website health.

3 Steps to View Query, Landing Page, and Position Together in Your GSC

a. Log into Search Analytics and check all four metrics: clicks, impressions, CTR, and position.

b. Select the “Pages” option, then choose the page you want to learn more about.

c. Once you have clicked on that page, switch the radio button to “Queries”. The list below updates to show the queries individual searchers typed that led them to that particular page.

On top of that, you get specifics on the number of clicks, impressions, CTR percentage, and position in search engine result pages. This helps you create more consumer-centric content, because you see what users act on. Learn all you can about how your site performs in search results so you can make sound business decisions about it; your chances for business growth depend on it. There are many more reports you can run from Search Analytics.
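The same page-then-queries view can be pulled programmatically through the Search Console API’s Search Analytics query method: request the “query” dimension and filter on the “page” dimension. This is a sketch of the request body and of flattening the response rows. It assumes you already have an authorized API client (for example, one created with google-api-python-client’s build function for the “searchconsole” v1 API), which is not shown. The site, page, dates, and sample response are hypothetical.

```python
def page_queries_request(page_url: str, start: str, end: str,
                         row_limit: int = 100) -> dict:
    """Search Analytics request body: queries that led to a single page."""
    return {
        "startDate": start,
        "endDate": end,
        "dimensions": ["query"],
        "dimensionFilterGroups": [{
            "filters": [{
                "dimension": "page",
                "operator": "equals",
                "expression": page_url,
            }]
        }],
        "rowLimit": row_limit,
    }


def summarize_rows(response: dict) -> list:
    """Flatten API rows into (query, clicks, impressions, ctr, position) tuples."""
    return [
        (row["keys"][0], row["clicks"], row["impressions"], row["ctr"], row["position"])
        for row in response.get("rows", [])
    ]


body = page_queries_request("https://www.example.com/services/seo/",
                            "2023-03-01", "2023-03-31")
# With an authorized client (not shown), the request would be sent roughly as:
# service.searchanalytics().query(siteUrl="https://www.example.com/", body=body).execute()

# Hypothetical response trimmed to one row for illustration.
sample = {"rows": [{"keys": ["minneapolis seo"], "clicks": 4, "impressions": 120,
                    "ctr": 4 / 120, "position": 7.3}]}
print(summarize_rows(sample))
```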

12. Crawl Errors Tool Finds Broken Links

John Mueller said in the Jan 11, 2019, Google Webmaster Forum that the crawl errors section will be going away:

On the Search Console side, there are lots of changes happening there of course. They’ve been working on the new version.

I imagine some of the features in the old versions will be closed down as well over time. And some of those might be migrating to the new Search Console. The intent is to help search marketers resolve issues faster that hinder Google indexing web pages.

I’m sure there’ll be some sections of Search Console also that will just be closed down without an immediate replacement. Primarily because we’ve seen there’s a lot of things that we have in there that aren’t really that necessary for websites. Where there are other good options out there or where maybe we’ve been showing you too much information that doesn’t really help your website.

So an example of what could be the crawl errors section where we list all of them and millions of crawl errors we found on your website. When actually it makes more sense to focus on the issues that are really affecting your website rather than just all of the random URLs that we found on these things.

Currently, there are no plans to replace this report in the new console.

For the record, the “Crawl Errors” report was located just under the Crawl tab. It was handy for checking both desktop and smartphone 404 errors without the need to read log files. These errors do elephant-sized damage to a site’s trust factor and SEO health. Check for and fix all “server errors,” “soft 404s,” “not found,” and “other” errors. Site tags and categories can be an issue, since they also cause unwanted duplicate content problems. Your webmaster should know how to correct them.

Find the “Not found” tab, and then click on each link listed below for more details. It will open up a box that offers a valuable “Linked from” tab. Here you will be given the exact URL of the page that has the problem link on it. Once you have gone into that page, corrected or removed the link, you can mark it as fixed.
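If you run your own site crawl (for example, with a desktop SEO crawler), you can reproduce the “Linked from” lookup: go through the links found on each page and collect every page pointing at a URL that returns a 404. The sketch below assumes you already have that link data as a dictionary; all URLs are hypothetical.

```python
# Hypothetical crawl data: each page maps to the internal links found on it.
links = {
    "https://www.example.com/": ["https://www.example.com/blog/",
                                 "https://www.example.com/old-offer/"],
    "https://www.example.com/blog/": ["https://www.example.com/old-offer/",
                                      "https://www.example.com/contact/"],
    "https://www.example.com/contact/": [],
}
# Hypothetical set of URLs reported as "Not found" (404).
not_found = {"https://www.example.com/old-offer/"}


def linked_from(links: dict, broken: set) -> dict:
    """Map each broken URL to the sorted list of pages that still link to it."""
    sources = {url: [] for url in broken}
    for page, targets in links.items():
        for target in set(targets):  # count each linking page once
            if target in sources:
                sources[target].append(page)
    return {url: sorted(pages) for url, pages in sources.items()}


print(linked_from(links, not_found))
```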

When your website is crawled frequently, that’s a good sign that you have healthy SEO in place. Many things can cause crawl rate declines: broken HTML code, unsupported web content on your site, instructions telling Googlebot not to parse a page’s components, or pages made up only of images.

Additionally, the Crawl Stats report shows how often your pages are crawled. If crawling drops and fails to return to previous levels, that is a clue to conduct a full SEO audit to find issues with your site. This report also shows the time spent downloading a page, in milliseconds, so you can follow improvements over time that reflect your page speed optimization efforts.
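One way to judge whether crawling has failed to return to previous levels is to compare the most recent week against the earlier baseline. This sketch assumes you have copied daily crawl request totals from the Crawl Stats report into a list. The 7-day window and 50 percent threshold are arbitrary, hypothetical choices to tune for your site, and the download times are made-up figures.

```python
from statistics import mean


def crawl_drop(daily_requests, window=7, threshold=0.5):
    """True if the last `window` days average below threshold x the earlier baseline."""
    if len(daily_requests) < 2 * window:
        raise ValueError("need at least two windows of daily data")
    baseline = mean(daily_requests[:-window])  # all days before the recent window
    recent = mean(daily_requests[-window:])
    return recent < threshold * baseline


# Hypothetical daily crawl request totals, oldest first.
steady = [1000] * 14 + [800] * 7
dropped = [1000] * 14 + [400] * 7
print(crawl_drop(steady), crawl_drop(dropped))  # False True

# Hypothetical average download times (ms), oldest first.
download_ms = [420, 410, 395, 380, 300, 290, 285]
print(f"Download time changed by {download_ms[-1] - download_ms[0]} ms")
```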

Webmasters can now mute email notifications.

Once Google has indexed a site’s 301 redirects, it can be safe to remove them. To cover new ones, though, leave your 301 redirects in place for at least one year.

Merge your Search Console with AdWords, Analytics, and Looker Studio

Your company can better understand how paid text ads and earned search results work collectively to help people searching online find you. By relying on multiple platforms, you gain a holistic view of how your online brand is doing overall when it comes to attracting views, clicks, and followers.

It helps to know where your paid and organic reports are located, why these insights matter, how they complement each other, and what you need to do to merge your Google accounts. You need a Search Console account for your website; you can then link that Search Console account to your AdWords and Analytics accounts to see both your paid and organic reports.

Bear in mind that Google Search Console is a toolbox. It doesn’t accomplish anything on its own without your help, and like all tools, it has a learning curve. It is a gold mine chock-full of data that is useful for making website changes that keep improving your website’s rankings and revenue. You can either learn how to use it yourself or find someone who already knows how.

Search Console Voice Search Report: Future Features

Mueller also talked in the same hangout about efforts to use Google Search Console to help webmasters better understand how site visitors find their web pages through voice search.

It would be handy to know what share of the people who find your site search with a hands-on-keyboard approach versus by voice. He stated that Google wants to “make it easier to pull out what people have used to search for voice and what people are using by typing. Similar to how we have desktop and mobile set up separately”.

One challenging aspect of voice search is that users favor longer-form sentences. Because any one of these long queries may be searched only rarely, Google Search Analytics may not register significant volume for it, lump it in with lower-volume keywords, and skip revealing it in the report. Google’s experts are discussing among themselves how they might single out voice searches for such a report in the future.
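Until Search Console separates voice queries, you can only approximate them. The heuristic below treats long or question-style queries from your Performance data as “conversational”. It is an assumption, not a voice flag, and both the six-word cutoff and the question-word list are hypothetical values to adjust for your audience.

```python
# Hypothetical list of words that often open spoken, question-style queries.
QUESTION_WORDS = ("who", "what", "when", "where", "why", "how", "can", "is", "does")


def looks_conversational(query: str, min_words: int = 6) -> bool:
    """Heuristic only: flag long or question-style queries (not a real voice signal)."""
    words = query.lower().split()
    return len(words) >= min_words or (bool(words) and words[0] in QUESTION_WORDS)


queries = [
    "seo minneapolis",
    "how do i submit a sitemap to google",
    "best seo company near me open now",
]
print([q for q in queries if looks_conversational(q)])
```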

The rapidly expanding use of voice search and direct answers has ramifications for website owners and SEO practices, beginning with the need to add more structured data to site content and to furnish clear, concise answers to specific questions up front in online content. Reports currently work well for determining which local keywords are pulling traffic.

John Mueller extends an invitation for input, “If you have any explicit examples of specifically how you think this type of feature, or any other feature in search console, could make it easier to really make high-quality websites, to really get some value out of search console in a way that makes sense for you to improve your service for users, then we’d really love to see those examples.”

Our team of search marketing experts specializes in WordPress and manages a number of successful WooCommerce websites like yours. While this article focuses on how your pages show up in organic search results, AdWords PPC advertisements, such as “Google Ads” or “Sponsored Ads”, are often necessary as well to gain the business revenue you are seeking.

“If you manage a website for users speaking a different language, you need to make sure that search results display the correct version of your pages. To do this, insert hreflang tags in your site’s HTML, as this is what Google uses to match a user’s language preference to the right version of your pages. Or alternatively, you can use sitemaps to submit language and regional alternatives for your pages.” – Christopher Ratcliff
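For the hreflang approach described above, each language or regional version of a page carries the same full set of alternate link tags with hreflang attributes, including one pointing to itself, plus an optional x-default for users who match none of them. Here is a minimal sketch that generates those tags; the URLs and language-region codes are hypothetical.

```python
from html import escape


def hreflang_tags(alternates: dict, default_url: str = "") -> str:
    """Build <link rel="alternate"> tags for every language/region version.

    The same full set (including a self-reference) belongs on each version.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in alternates.items()
    ]
    if default_url:
        # Fallback for users whose language/region matches no listed version.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(default_url)}" />'
        )
    return "\n".join(tags)


# Hypothetical English (US) and Spanish (Mexico) versions of one page.
alternates = {
    "en-us": "https://www.example.com/en-us/",
    "es-mx": "https://www.example.com/es-mx/",
}
print(hreflang_tags(alternates, default_url="https://www.example.com/"))
```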

“Google explained that in 2015 they saw a 180-percent increase in websites being hacked compared to 2014 and also saw ‘an increase in the number of sites with thin, low-quality content.’ They also sent out more than 4.3 million messages to webmasters to notify them of manual actions on their sites. With that, they saw a 33-percent increase in the number of sites that went through the reconsideration process.” – Barry Schwartz, Search Engine Land’s News Editor

“Google Analytics is more about who is visiting your site—how many visitors you’re getting, how they’re getting to your site, how much time they’re spending on your site, and where your visitors are coming from. Google Search Console, in contrast, is geared more toward more internal information—who is linking to you, if there is malware or other problems on your site, and which keyword queries your site is appearing for in search results. Analytics and Search Console also do not treat some information in the exact same ways, so even if you think you’re looking at the same report, you might not be getting the exact same information in both places.” Angela Petteys on Moz**

To wrap up, this advice comes directly from Google.

Google’s Beginner Search Console Instructions: follow these steps:

- Verify site ownership. Get access to all of the information Search Console makes available. Learn more about how to verify your site ownership.

- Make sure Google can find and read your pages. The Index coverage report gives you an overview of all the pages Google indexed or tried to index in your website. Review the list available and try to fix page errors and warnings.

- Review mobile usability errors Google found on your site. The Mobile usability report shows issues that might affect your users’ experience while browsing your site on a mobile device.

- Consider submitting a sitemap to Search Console. Pages from your site can be discovered by Google without this step. However, submitting a sitemap via Search Console might speed up your site’s discovery. If you decide to submit it through the tool, you’ll be able to monitor information related to it. Learn more about the Sitemaps report.

- Monitor your site’s performance. The Search performance report shows how much traffic you’re getting from Google Search, including breakdowns by queries, pages, and countries. For each of those breakdowns, you can see trends for impressions, clicks, and other metrics.
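For the first step, one of Search Console’s verification options is an HTML tag: a meta element named google-site-verification, with a token Search Console gives you, placed in your home page’s head section. The sketch below checks whether a page’s HTML already contains that tag before you click Verify. The token “abc123” is a placeholder, not a real value, and fetching the live page is left out.

```python
from html.parser import HTMLParser


class VerificationFinder(HTMLParser):
    """Collect the content values of <meta name="google-site-verification"> tags."""

    def __init__(self):
        super().__init__()
        self.tokens = []

    def handle_starttag(self, tag, attrs):
        # Also called for self-closing tags like <meta ... /> by default.
        attributes = dict(attrs)
        if tag == "meta" and attributes.get("name") == "google-site-verification":
            self.tokens.append(attributes.get("content"))


def has_verification_tag(html_text: str, expected_token: str) -> bool:
    """True if the HTML contains a verification meta tag with the expected token."""
    finder = VerificationFinder()
    finder.feed(html_text)
    finder.close()
    return expected_token in finder.tokens


# Hypothetical home page markup with a placeholder token.
page = ('<html><head><meta name="google-site-verification" content="abc123" />'
        '</head><body></body></html>')
print(has_verification_tag(page, "abc123"))  # True
```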

If you’re considering hiring an internal or external SEO, the earlier, the better. The ideal time to enlist help is when your company first considers a site redesign or plans to launch a new business website. When a site is designed to be search engine-friendly from the ground up, you typically save valuable time and money. However, a good SEO can do a ton to improve an existing site.

There are many types of website audits, and some are more important than others. Just remember that someone who really knows how to glean deep insights from the Search Console will put your business ahead in Google’s organic search result pages.

Conclusion

Now that you have a better grasp of how SEOs use the Search Console, call 651-206-2410 to gain the benefit of a proven SEO project manager who can either consult with your existing staff or oversee the entire project.

Consider our Minneapolis Digital Marketing SEO Services

* https://support.google.com/webmasters/answer/7042828

** https://moz.com/blog/a-beginners-guide-to-the-google-search-console