Indexing your Website Faster with the Google Index Coverage Fixing Report

Updated 2.6.2024

With Google improving our ability to use live testing for Technical SEO fixes, and its shortened wait time for re-crawling web pages submitted to be re-indexed, you can respond to user preferences faster.

All four leading search engines, Google, Bing, Yahoo, and Yandex, are passionately dedicated to understanding what people who search on the web want. That demands a deeper process than ever before to instantly surface better results to end users by understanding their intent as much as what they type in a search box or say. But if, for example, your web page that provides the perfect solution has implemented structured data incorrectly, it may not even be indexed by Google the way you want it to be. An audit focused on testing your site’s structured data will also help keep your pages indexed.

Let’s deep dive into getting your questions answered.

What is the Google Index Coverage Fixing Report?

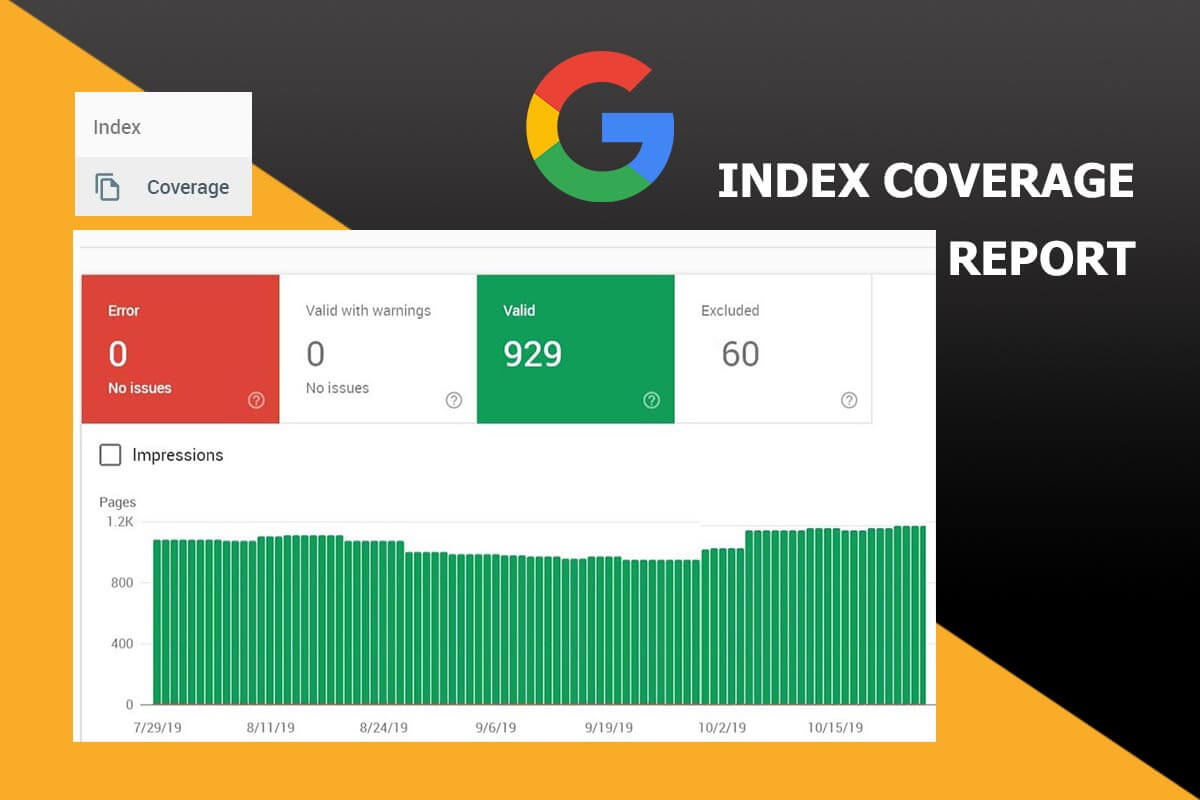

The Google Index Coverage Fixing Report details the indexing status of all URLs that GoogleBot visited, or attempted to visit, in a specified Google Search Console property. The summary page shows the results for all URLs for each property. They are grouped by error, warning, or valid status. It also provides the reason for that status, which is especially helpful for not found (404) errors.

Today the Google Index Coverage Fixing Report is one more of the Google Search Console’s experimental features, promised to be showing up soon to a select group of beta users.

It helps to first understand what SEO actually is.

How do I know if my mobile site is in Google’s index?

You can inspect a live URL in your Search Console. Test whether an AMP page on your site is able to be indexed by doing the following:

- Navigate to the corresponding property in your GSC.

- Open up the Google URL Inspection tool.

- Paste in a specific URL to see its current index status.

- Look to see if it says “Coverage – Submitted and indexed”.

- Below that check if it says “Linked AMP version is valid”.

- You can both “TEST LIVE URL” and “REQUEST INDEXING”.

- You can also use Google’s AMP page validation tool.
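The checks in the list above can also be scripted once you have inspection data for a URL. Here is a minimal Python sketch, assuming a response shaped like the output of the Search Console URL Inspection API. The field names (`inspectionResult`, `indexStatusResult`, `coverageState`, `ampResult`, `verdict`) and the sample values are assumptions for illustration, so verify them against the current API documentation before relying on them.

```python
def summarize_inspection(result: dict) -> dict:
    """Pull the index and AMP status out of a URL-inspection style response.

    The key names used here are assumed, not confirmed by this article.
    """
    inspection = result.get("inspectionResult", {})
    index_status = inspection.get("indexStatusResult", {})
    amp = inspection.get("ampResult", {})

    coverage = index_status.get("coverageState", "unknown")
    normalized = coverage.lower()
    return {
        "coverage": coverage,
        # "Submitted and indexed" counts as indexed; "... not indexed" does not.
        "indexed": "indexed" in normalized and "not indexed" not in normalized,
        "amp_valid": amp.get("verdict") == "PASS",
    }


# Hypothetical sample response for a page with a valid linked AMP version.
sample = {
    "inspectionResult": {
        "indexStatusResult": {"coverageState": "Submitted and indexed"},
        "ampResult": {"verdict": "PASS"},
    }
}

print(summarize_inspection(sample))
```

A summary like this is handy when you want to check a batch of URLs and only open the Search Console interface for the ones that are not indexed.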

Whether you are a business that serves a B2B audience or B2C clients, managing how your pages get crawled and indexed is a matter of surviving online.

How to Conduct an Index Coverage Analysis

When conducting an index coverage analysis for larger websites (100K+ pages), filtering by sitemap speeds up the process. If the site has all of its XML sitemaps submitted individually in Google Search Console, you can view data for the total number of pages found.

We like to segment by individual sitemaps. This lets us sort the indexed from the not indexed pages and gain information about why. Sitemaps are helpful depending on how efficiently a site’s content is organized. Structured content with ontology grouping as sections, or by sub-folder, and content type makes this analysis easier. It helps us determine just how many pages from different content segments are correctly indexed by Google.

This process also assists Google Entity Search, since its structure helps define entity relationships.

Select ‘Pages,’ and then use the drop-down at the top to select the sitemap you’ve chosen. Next, you can scroll to read the overview metrics and why designated ones are excluded. The same data can be obtained by verifying sub-folders directly in Google Search Console.
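Before filtering in Search Console, it can help to know how many URLs each content segment contributes to a sitemap. The following is a rough Python sketch, assuming a standard XML sitemap and assuming that the first path segment of each URL names its content section. The sample sitemap and the `count_urls_by_section` helper are hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import Counter
from urllib.parse import urlparse

# Namespace used by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def count_urls_by_section(sitemap_xml: str) -> Counter:
    """Count sitemap URLs per top-level sub-folder."""
    root = ET.fromstring(sitemap_xml)
    counts = Counter()
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        path = urlparse((loc.text or "").strip()).path
        segments = [segment for segment in path.split("/") if segment]
        # The first path segment names the section; the home page is "(root)".
        section = segments[0] if segments else "(root)"
        counts[section] += 1
    return counts


# Hypothetical sitemap content for illustration only.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/blog/post-1</loc></url>
  <url><loc>https://www.example.com/blog/post-2</loc></url>
  <url><loc>https://www.example.com/products/widget</loc></url>
</urlset>"""

section_counts = count_urls_by_section(SAMPLE_SITEMAP)
for section, total in section_counts.most_common():
    print(f"{section}: {total}")
```

Comparing these totals with the indexed counts that Search Console reports for the same sitemap shows which content segments are falling short.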

Barriers Digital Marketers Face Gaining Data Insights

Leading marketers work hard to ensure that every web page is indexed by Google correctly, and they find success when they can find and integrate data-driven strategies for making improvements.

In a June 21, 2017 article titled Better Together: Why Integrating Data Strategy, Teams, and Technology Leads to Marketing Success, the need for help to understand a site’s data in the Search Console was highlighted. Casey Carey stated that “75% of marketers say the biggest barrier to using data insights is a lack of education and training on data and analytics.”

For years, search professionals have sought to gain a list from Google showing which pages are indexed and which are not. Today, with much gratitude, we are on the threshold of gaining that straight from our clients’ Search Consoles.

What is Search Engine Indexing?

Search engine indexing collects, parses, and stores web data to make fast and accurate information retrieval possible. “Index design incorporates interdisciplinary concepts from linguistics, cognitive psychology, mathematics, informatics, and computer science. An alternate name for the process in the context of search engines designed to find web pages on the Internet is web indexing”, according to Wikipedia.

Index Coverage Report Shows the Count of Indexed Pages

What specific data does the Search Console Index Coverage Report provide?

* The number of valid pages on your site that it indexes

* How many pages have errors

* How many pages have warnings

* Levels of informational data

* The number of impressions indexed pages gain.

What type of Information Data Will the Report Pull?

Google algorithms have advanced methods for transferring informational data. Schema structured data is widely adopted by the Search Giant to glean information from a site’s semantic content to better match pages to relevant user search queries. It uses data to paint a picture of what a website consists of. Rich snippets are the added visual pieces of information shown in a search result.

In May, the company unveiled Data GIF Maker, an online tool intended to simplify processes to display relative interest between two topics based on data gleaned from Google’s Internet search trends or other trusted data sources. Additionally, Google has competition in automating data-to-visual transformations. One example is Infogram, an editor that converts information from user data into publishable infographics. At this point, we have much to learn about how this will populate data and all the ways to use it for getting your site indexed better.

Because Google centers on what users want, if the report shows you that a page is valid, but it has a status of “index low interest”, you might want to consider optimizing the page better. Google continues its endeavor to find and index content even faster than it does now. Earlier, we saw that they began beta testing a real-time indexing API for that reason.

Google will Notify You of Recrawl Progress

Gaining these Search Console functions may shorten some of the manual workloads for SEOs. John Mueller of Google tells us**** that it is as simple as this: “After you fix the underlying issue, click a button to verify your fix, and have Google recrawl the pages affected by that issue. Google will notify you of the progress of the recrawl, and will update the report as your fixes are validated”.

Google’s new “Index Coverage Report” function in its Search Console makes it easier to locate perplexing SEO errors on retail AMP pages. Grouped by error type, Webmasters can look deeper for a specific coding error. A button will confirm that a problem is resolved after fixing affected pages; then the URLs impacted can be re-crawled by GoogleBot and reported as repaired. Follow-up progress reports showing Big Data results of the re-exploration of such pages will hopefully make the disappearance of these errors a faster process.

SEOs can get Pages Indexed Faster to Meet User Demands

Formerly, our Google Search Consoles didn’t provide a page-by-page breakdown of a site’s crawl stats with a handy list of indexed pages. To obtain the details necessary, many search engine optimization specialists had to study the server logs, use specialized tools, and put in a ton of time studying Analytics SEO reports.

Determining a site’s crawl budget and how to increase it is an easier SEO task when we can see internal links to its pages, crawl stats, and errors that prevent indexation.

After arriving on an indexed web page, content must now provide users with a positive experience or they quickly leave and go to someone else for the answer. The depth of an SEO’s ability to understand user engagement directly correlates to the degree of challenge a business faces to gain visibility in the top positions of search results.

As the machines continue to train themselves to get smarter, human logic still has its role, by aggressively learning, reading indexing reports, and employing the best of strategies. There is little time to try them all before the search landscape has changed again. What SEO expert won’t love gaining tips on how to fix indexing issues straight from Google?

Focusing on data-driven insights rather than reacting to “what we think” is becoming a priority for SEOs who want to produce results that perform and please leading marketing executives. Know where your business leads are coming from, which web pages users interact with the most, and what solutions they are looking for that you can provide to gain a competitive edge online.

The Impact that Not Getting Pages Indexed Has on Business

You cannot build your site’s credibility if your web pages are not indexed.

We build business relationships differently. The internet has changed the way we form new connections and maintain client relationships, as well as those with business partners, friends, family, famous people, and acquaintances. Now that people can interact with each other without ever meeting in person and keep each other and buyers updated on new products, inventory levels, reviews, and solutions, knowing how individuals engage with online content is essential to business growth. One great example is your chance to gain significant visibility in new product carousel formats in SERPs.

Google is seeking to provide actionable insights to assist in fixing the code faster and making it easier to ensure page indexation and crawlability. This, in turn, means that users searching for related content have a better chance of consuming those indexed pages.

Google most likely takes special note of your review and product structured data implementation while indexing your web page. As with most new features, this may develop over time after gaining feedback from users. Google doesn’t recognize fixed content instantly, but this new Search Console function is meant to reduce the time you have to wait to see the page re-indexed. If schema errors have impacted the page’s chance to be indexed, it may pay attention to your markup while indexing your site next time. Remember to notify Google that your web content or coding issues have been fixed.

Webmasters should monitor their site’s indexing errors, as too many errors could potentially signal to Google that your site’s health score is low or that it is poorly managed and maintained. Often smaller businesses feel too strained to invest in ongoing site maintenance, so they simply ignore their error reports or forget to mark errors as fixed. It can be overwhelming to anyone to suddenly notice a very long list of errors. Clean your list of any indexing errors quickly and schedule in time to watch and keep it clean. Healthcare sites that provide essential patient services need to take extra steps to ensure that these pages are indexed correctly.

Internet Users are Constantly Connected for Purchasing Decisions

The rise of new devices and improved mobile communications means that the average American has in-the-moment access to the Internet. Shoppers are no longer tied to desktop computers; they can get online by talking to Google Home, Siri, or Amazon Echo anywhere and anytime, which has created noticeable impacts on a website’s performance. Whether your pages have clean code for better chances to be indexed, and whether they can be pulled up quickly via the mobile search algorithm or in voice and image search, matters more than ever before.

For instance, wearing an Apple Watch with a smartphone in one’s pocket is now quite common. It has created a need for near-constant accessible data and the right information that users want. If your web page offers the best solution but isn’t even being crawled by Google, your business may be missing out on easy sales. People live-tweet-post and share all the time. That may be what they overhear at an outing, while attending a business event or offer as a useful opinion about a product.

I recently attended a wedding where a huge beautifully embossed sign welcomed attendees to an “Unplugged Wedding”. While people may be actively discouraging social media documentation on smartphones during life celebrations like this one, businesses typically want mobile users to interact with them 24/7.

To have your web pages with errors quickly fixed for re-indexing amounts to real dollars in the bank for those selling both products and services in the digital space. But before you redo and request re-indexing, do some background marketing research to see if the page is missing any other key details.

Submitting Fixed URLs to the Google Index

Google Sitemaps has long allowed Webmasters to submit URLs to the Google index and inform Google when these pages change. Certainly, fresh indexing after fixing issues on pages with 404 errors and pages with warnings, such as broken 301 redirects, can quickly increase a website’s crawl coverage.

Monitor your mobile search results separately from desktop search results. Once you have cleaned your list of indexing errors, resubmit each URL, and schedule time to watch and keep this new list of indexing errors clean (as soon as it’s available to all).

Quite possibly, the Index Coverage Report will be used more by people with basic SEO skills. Individuals who have access to server log files, and who are familiar with resolving complex indexing issues, may find this more of a handy summary report.

Google Wants to Render and Index Mobile Content Better

Google’s Search Guide on Common Mistakes details how support is improved for common indexing mistakes that many webmasters make when designing for mobile. It addresses blocked JavaScript, CSS, and image files that prevent optimal rendering and mobile indexing. We are instructed to “always allow Googlebot access to the JavaScript, CSS, and image files used by your website so that Googlebot can see your site like how an average user does. If your site’s robots.txt file disallows crawling of these assets, it directly harms how well our algorithms render and index your content. This can result in suboptimal rankings”.
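One quick way to spot this problem is to test your asset URLs against your robots.txt rules. Below is a minimal sketch using Python’s standard `urllib.robotparser` module. The robots.txt content, the asset URLs, and the `blocked_assets` helper are hypothetical examples. Note that this standard-library parser uses simple prefix matching and may not interpret wildcard rules the way Googlebot does, so treat the result as a first pass rather than a final verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_assets(robots_txt: str, asset_urls: list[str],
                   user_agent: str = "Googlebot") -> list[str]:
    """Return the asset URLs that the given robots.txt rules disallow."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in asset_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that accidentally blocks a JavaScript folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /assets/js/
Disallow: /private/
"""

# Hypothetical asset URLs used by a page.
ASSETS = [
    "https://www.example.com/assets/js/app.js",
    "https://www.example.com/assets/css/site.css",
    "https://www.example.com/images/hero.png",
]

for url in blocked_assets(ROBOTS_TXT, ASSETS):
    print("Blocked for Googlebot:", url)
```

Any URL this reports is worth confirming in Search Console, since a blocked script or stylesheet can change how the page renders for Googlebot.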

Developers are also warned that app download interstitials may lead to mobile indexing issues. The guide states, “Many webmasters promote their business’ native apps to their mobile website visitors. If not done with care, this can cause indexing issues, and disrupt the visitor’s usage of the site.”

Google also urges app developers to use App indexing to avoid common mobile indexing mistakes: “If you have an Android app, consider implementing app indexing: when indexed content from your app is relevant for a specific query, we will show an install button in the search results, so users can download it and go straight to the specific page in your app.” It also links to an article on making your mobile pages render in under one second, which may correlate mobile load speed to better indexing. Getting your pages indexed is one hurdle, but having them load so slowly that users don’t wait to read them is another problem to solve.

Since more and more search queries are occurring when someone wants an immediate answer, be sure that your web pages that can provide quick answers are indexed. Search intent influences the consumer journey at every touch point.

Fetch as GoogleBot Feature Speeds Up Page Indexing

Another way to index your website faster with Google is to use the Fetch as Google feature in Google’s webmaster tools. If your website is high quality and has good informative content, this should help submitted pages be indexed within a few minutes to a few hours at most.

The new Index Coverage Fixing function is meant to help some websites that are missing their indexing errors catch them better. All of Google Search essentially begins with a site’s URLs. John Mueller of Google says, “we go off to the Internet to render these URLs, kind of like a browser, and the content we pick up there, we take for indexing”.

Always seek to follow Google’s General Guidelines; logically, this will help Google find, index, and rank your site faster. If you inadvertently find that your website has been removed entirely from the Google index or otherwise hurt by an algorithmic or manual spam action, take actionable steps quickly to show up in results on Google.com or on any of Google’s partner sites. Make sure that your code actually renders and supports indexing. For local foot traffic, also adding Local Business schema markup helps your business get found.
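As an illustration of the Local Business markup mentioned above, here is a small Python sketch that builds a schema.org `LocalBusiness` JSON-LD block. The business name, URL, and phone number are placeholders, the `local_business_jsonld` helper is hypothetical, and only a handful of common properties are shown. Check Google’s structured data documentation for the properties your business type needs.

```python
import json


def local_business_jsonld(name: str, url: str, telephone: str,
                          locality: str, region: str) -> str:
    """Build a minimal schema.org LocalBusiness JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "addressLocality": locality,
            "addressRegion": region,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = local_business_jsonld(
    name="Example Business",
    url="https://www.example.com/",
    telephone="+1-555-555-0100",
    locality="Minneapolis",
    region="MN",
)

# JSON-LD is usually embedded in the page inside a script tag like this one.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from one function keeps it syntactically valid JSON, which removes one common source of structured data errors.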

Great Content Grows your Website’s List of Indexed Pages

Organic search traffic is critical for growing your website’s list of indexed pages and your business revenue. In our experience, it’s typically the source of over half of all site traffic, as compared to just 7% from social media. Some research studies report that around 33% of an average site’s traffic can be attributed directly to organic search, while others claim the figure is closer to 64% of your web traffic.

But these stats only reflect an average; if your site doesn’t show up in Google’s index or Google Discover at all, you can’t expect to have good visibility in SERPs. To get a new site or blog indexed more quickly, you’ll need to allocate more resources to put towards increasing your conversion rates, upping your presence on social media, and, naturally, writing and promoting great and useful content that gets indexed. To increase the value of your content production, start with a consumer behavior analysis; then check that your new content is indexed quickly and correctly.

What Benefits Do SEOs Gain from the New Indexing Workflow?

* The ability to find, fix, and validate needed SEO fixes faster.

* When seeking to prevent indexation of pages identified with low or no SEO value, you can also validate in the visual report that they are not indexed. If the work has been done that identifies certain URL parameters in the Google Search Console so that Google doesn’t crawl or index the same pages with different parameters separately, it should be indicated.

* If your CSS files are causing problems that prevent a page from indexing, Google can’t see pages the way you want it to and get them indexed. The provided examples are meant to reduce diagnostic time.

* Another frequent trouble spot is if your JS isn’t crawlable. With better indexing error reporting, quick fixes will help Google index your site’s dynamically generated content.

* Submit your sitemap right there.

* Filter your Index Coverage data to quickly study individual sitemaps.

* While tasks on indexing your site should target all major search engines (Google, Bing, Yandex, Yahoo, etc.), getting help from this new Search Console indexing error report will improve your chances to be better indexed across them all.

Ongoing Checking on Your Website’s Index Status

What to continually look for when diagnosing your Index Status and what to quickly fix:

• A site’s number of indexed pages should reflect consistency by steadily increasing in number. If so, it means that Google can index your site and that you are keeping your site ranking better by adding fresh indexed content. Find and resolve indexing errors when new pages fail to add to the number that get indexed.

• Note any unexpected and extreme drops in your graph of indexed pages. This new additional indexing report will help Webmasters find and fix where Google is having trouble accessing your site.

• Watch for sudden and odd spikes in the indexed pages graph. Whether the cause is duplicate content from both www and non-www pages being indexed, the wrong canonicals being indexed, or a potential hack, this signals the need to take action.
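The checks in this list can be approximated with a simple script if you record your indexed page count at regular intervals. This is a rough Python sketch, assuming a list of weekly counts copied from the report. The 20% threshold and the sample numbers are arbitrary assumptions, not values recommended by Google.

```python
def flag_index_anomalies(counts: list[int],
                         threshold: float = 0.2) -> list[tuple[int, str, float]]:
    """Flag period-over-period changes in indexed pages beyond the threshold.

    Returns (position, "drop" or "spike", relative change) for each flagged point.
    """
    flags = []
    for i in range(1, len(counts)):
        previous, current = counts[i - 1], counts[i]
        if previous == 0:
            continue  # A relative change from zero is undefined, so skip it.
        change = (current - previous) / previous
        if change <= -threshold:
            flags.append((i, "drop", change))
        elif change >= threshold:
            flags.append((i, "spike", change))
    return flags


# Hypothetical weekly indexed-page counts.
weekly_counts = [1000, 1020, 1045, 700, 710, 1400]

anomalies = flag_index_anomalies(weekly_counts)
for position, kind, change in anomalies:
    print(f"Week {position}: {kind} of {change:+.0%}")
```

Steady small increases pass without a flag, while the sharp fall and the sudden jump in the sample data are both reported for follow-up.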

How the new Search Console Indexing Reports Look and Function

Web developers and SEOs are eager to delve into these new reports and utilize them to improve web sites. Glenn Gabe, President of G-Squared Interactive LLC, released several screenshots from the new Index Coverage Report in Google Search Console* (GSC) on September 7, 2017 in a LinkedIn article.

If you believe that your site has pages that are already indexed, but without being submitted to sitemaps yet, Google’s new Index Coverage report will fill in the missing gaps. No export feature seems to come with it so far. This is helpful if you want to know where you show up in People Also Ask boxes in Google SERPs.

What piqued my interest is Glenn’s comment: “Indexed, but blocked by robots.txt. These URLs actually 404, but Google can’t crawl them to know”.

Finding fine-grained page indexing issues

In July of 2023, we gained more information. Google’s John Mueller explained it this way.

“…more fine-grained page indexing issue information is now available. As a result, you may see a rise in the portion of issues being reported on. This is not a change in the processing of your website for Search, it is purely in reporting.” – Data anomalies in Search Console ****

Common Indexing Questions About AMP Pages

Question: If I have installed AMP pages correctly, how long until they are indexed?

Answer: There is no difference in the pace at which Google crawls and indexes AMP pages; crawling and indexing occur at the same pace as for traditional desktop pages.

Tip: If your AMP pages are not being indexed or seem slow to be indexed, it may be that they are not validating correctly. Go back and examine them for errors using reports in the new Google Search Console. Valid code will ensure a more successful crawl rate of your AMP pages.

Question: Are some AMP page types indexed faster than others?

Answer: Whether it’s your company’s home pages or a page dedicated to a product’s details, nothing changes their flow. Google relies on a number of factors when assessing the optimal crawl frequency on a per-page basis, like how often a page’s primary content is updated.

Tip: The usual process begins with crawling in order for indexing and ranking to occur; submitting a page to be recrawled more frequently doesn’t impact its indexing or ranking.

Question: For AMP versions, should AMP category pages be indexed?

Answer: Google typically doesn’t recognize a product category page or product description page for search; however, a category page might prompt the chance to find new product description pages.

Tip: Google has warned that “dynamically generated product listing pages can easily become ‘infinite spaces’ that make crawling harder than necessary”.

Question: If product pages update frequently, is this an indexing nightmare?

Answer: No. The URL of a product page type can remain consistent while the product item details change frequently. Google understands that one-of-a-kind items are frequently sold. This includes antiques, auction items, custom artwork, or classified ads that have a limited lifetime.

Tip: Keep your sitemaps updated with new description details for each product page.

Question: Does crawling my AMP pages chunk into my crawl budget?

Answer: It does. With the effort to crawl all elements, including AMP, it is part of a server’s crawl budget and has value to identify and disclose issues on your site.

Tip: Few sites have cause for concern about the crawl budget; Google’s indicates

Tip: When today’s GoogleBot crawls a page, it must also fetch subresources. These typically include JavaScript, CSS, PDFs, images, and video clips, which it needs to fully comprehend what’s included within the page. Keep your JS and CSS support documents to a minimum, as the number of subresources is often a lot greater than the main document or visual files that help the reader. While your images may look terrific on the web, only if they follow current image guidelines can they be most effective.

“Google has built a method that allows marketers to work quickly on fixes and not waste time waiting for Google to recrawl the website, only to tell you later that it’s not fixed yet.” – MediaPost

“We’ve built a mechanism to allow you to iterate quickly on your fixes, and not waste time waiting for Google to recrawl your site, only to tell you later that it’s not fixed yet. Rather, we’ll provide on-the-spot testing of fixes and are automatically speeding up crawling once we see things are ok.” – John Mueller

What Could Cause my Site to Get Deindexed?

According to Google**, using one or more of the following technical SEO techniques may mean that your website ends up being deindexed:

- Robotically generated content.

- Partaking in link schemes.

- Devious redirects.

- Hidden links.

- Scraped and borrowed content.

- Participating in affiliate programs without adding sufficient value.

- Creating pages with malicious behavior, such as phishing or installing viruses, trojans, or other badware.

- Running visitors through splash or doorway pages.

- Abusing rich snippets schema code implementation.

- Setting up automated search queries to Google.

Instead of ranking your pages faster, Google may remove websites that use manipulative tactics from its search results. When you want to rank and get your website indexed fast on the search engine results page, do it the right way by adhering to Google Best Practices and using their robust set of tools. Conversely, web pages that adhere to Google’s Quality Rater Guidelines and offer unique and quality content are more likely to show up in featured snippets.

Getting clear about what’s involved with search engine indexing also includes Bing, Yahoo, and Yandex. But if Google sends you most of your web traffic, start with what helps Google index your web pages faster.

Owner of Hill Web Marketing, Jeannie Hill has years of experience and a well-established reputation for positive results in organic search, search engine optimization, SEM, and PPC. I team with clients to help their businesses get the right web pages crawled faster. Expertise in Google’s SEO guidelines, diagnostics, and website repair. I enjoy residing in Minneapolis, Minnesota, where I provide digital marketing services to the metro area and beyond. Services for getting your site indexed faster typically start around $1,500.

As Google’s Answer Engine advances, so does its rich set of tools in the Search Console.

Google has indexing bugs from time to time. Currently it is fixing site name issues, so it’s important to follow updates. Google also dropped the cache link from the search results at the start of 2024.

An Indexed Page List shortens the time for re-crawling web pages submitted to be re-indexed.

After working so hard to curate content that is right for users and that amplifies your product, your effort could be in vain, and you may miss connecting people to your site and your product. If your site is not indexed properly, people cannot visit it and don’t have the chance to know what you sell.

SUMMARY:

To have your website indexed faster by GoogleBot, we also suggest that businesses create an Organization profile page and engage in communities. As well, share your website link publicly by creating a post directly within your Google Business Listing. This is another way to get those new pages indexed, as Google follows such links very quickly.

We welcome your feedback about this post as well as your insights on any other information which might be useful to our readers on the topic of indexing websites.

Call 651-206-2410 for services that Leverage Your Google Search Console Reports

* https://www.linkedin.com/pulse/screenshots-from-new-index-coverage-report-google-search-glenn-gab

** https://support.google.com/webmasters/answer/35769

*** https://webmasters.googleblog.com/2017/08/a-sneak-peek-at-two-experimental.html

**** https://support.google.com/webmasters/answer/6211453?hl=en#zippy=%2Cpage-indexing-report